=Overview

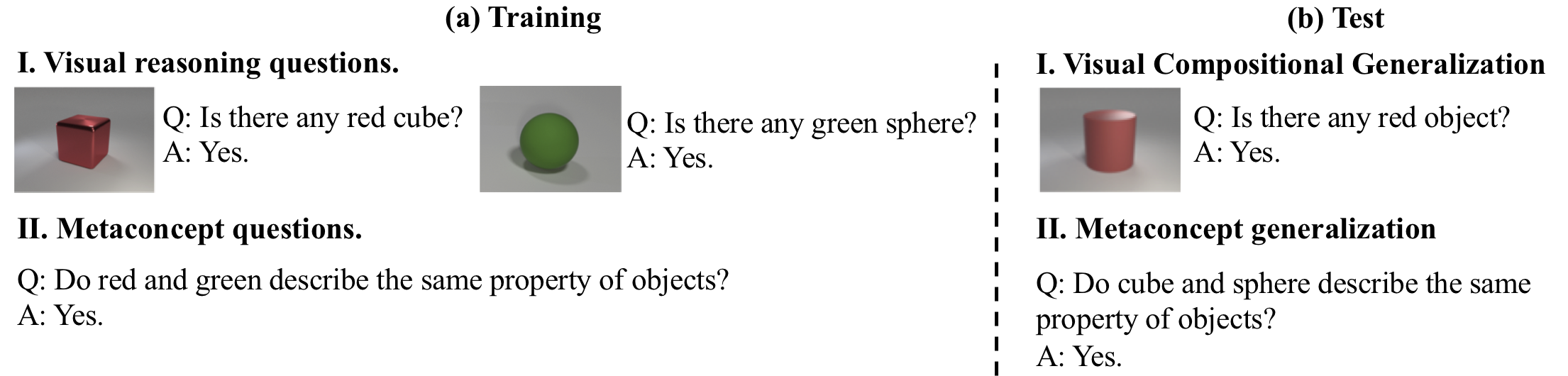

Figure 1: The visual concept-metaconcept learner learns concepts and metaconcepts from images and two types of questions. The learned knowledge helps visual concept learning (generalizing to unseen visual concept compositions, or to concepts with limited visual data) and metaconcept generalization (generalizing to relations between unseen pairs of concepts..

Humans reason with concepts and metaconcepts: we recognize red and green from visual input; we also understand that they describe the same property of objects (i.e., the color). In this paper, we propose the visual concept-metaconcept learner (VCML) for joint learning of concepts and metaconcepts from images and associated question-answer pairs. The key is to exploit the bidirectional connection between visual concepts and metaconcepts. Visual representations provide grounding cues for predicting relations between unseen pairs of concepts. Knowing that red and green describe the same property of objects, we generalize to the fact that cube and sphere also describe the same property of objects, since they both categorize the shape of objects. Meanwhile, knowledge about metaconcepts empowers visual concept learning from limited, noisy, and even biased data. From just a few examples of purple cubes we can understand a new color purple, which resembles the hue of the cubes instead of the shape of them. Evaluation on both synthetic and real-world datasets validates our claims.

=Resources

- VCML model in [PyTorch (Official)].

- Augmented datasets (CLEVR, GQA, and CUB). Details could be found at here.

=Related Publications

The Neuro-Symbolic Concept Learner: Interpreting Scenes, Words, and Sentences From Natural Supervision

- ICLR 2019 (Oral)

- Paper /

- Project Page /

- BibTeX

Neural-Symbolic VQA: Disentangling Reasoning from Vision and Language Understanding

- NeurIPS 2018 (Spotlight)

- Paper /

- Project Page /

- BibTeX